Every decision you make as a solo founder costs you twice.

Once in the deciding — the mental energy of weighing options, running scenarios, sitting with uncertainty. And once in the aftermath — the second-guessing, the what-ifs, the slow dawning realisation months later that you could have seen the warning signs earlier if you'd known what to look for.

Decision quality deteriorates as the day progresses, yet solo founders must keep making critical choices well into exhaustion. They also get stuck in decision loops, moving too slowly out of fear or too quickly out of panic, because there's no co-founder to pressure-test their thinking, no management team to surface concerns, and no board to push back before a decision is locked in.

The result is predictable: founders make the same categories of decision repeatedly — should I build this? should I hire? should I pivot? — and each time, they reconstruct the decision framework from scratch. Same anxiety. Same blind spots. Same missing variables discovered too late.

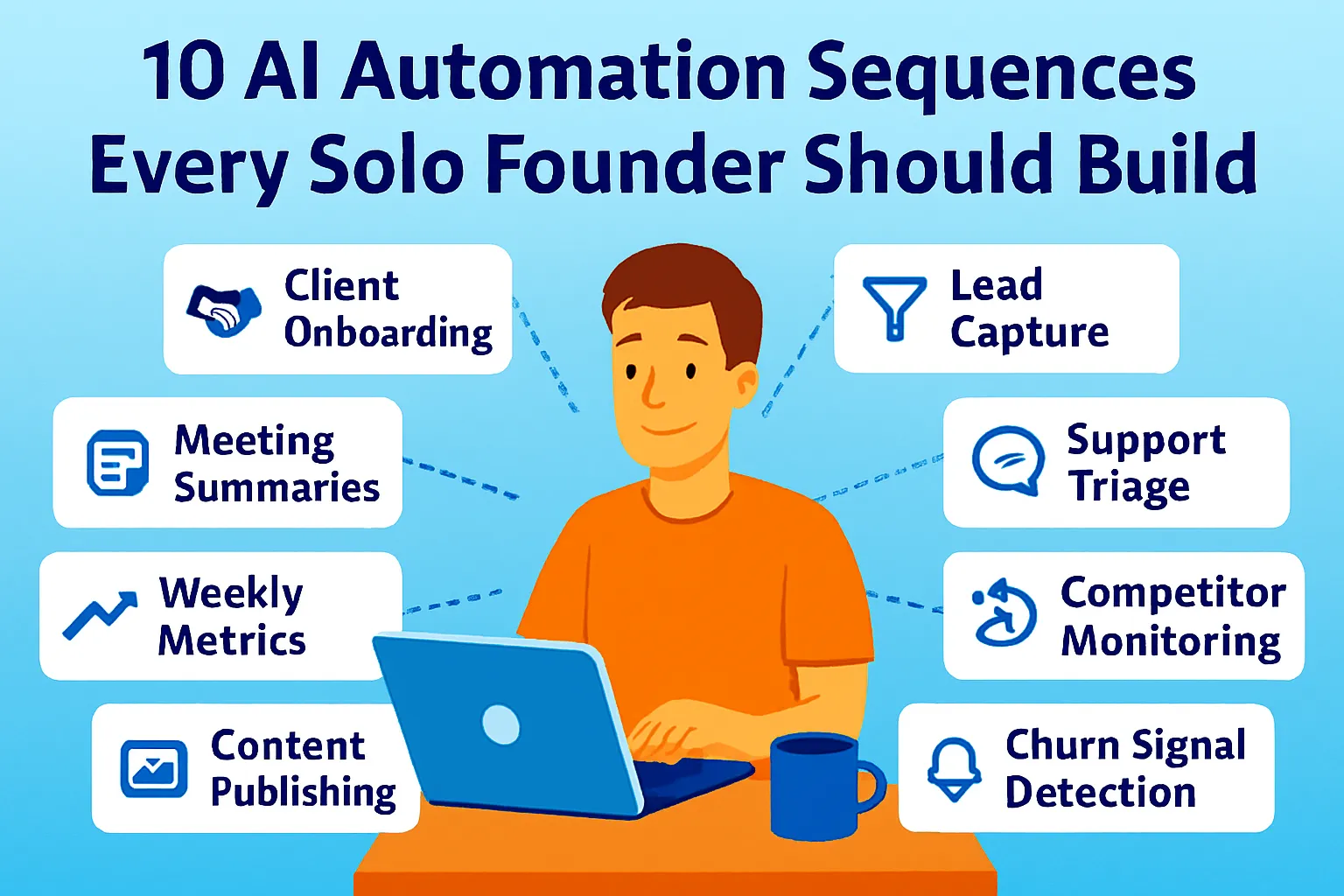

This article builds a standing decision system: three prompt templates for the decisions that come up most often, a quick triage test to route each decision to the right template, and a decision log that turns every call into institutional knowledge you can draw on next time.

The difference between this and a general strategic thinking framework: this one is built for speed at the operational level. Not the big-bet annual decisions that deserve a pre-mortem and four lenses — the medium-stakes recurring calls that arrive weekly, demand an answer within 24-48 hours, and benefit from structure without ceremony.

The Decision Triage Test

Before running any prompt, route the decision to the right category. Thirty seconds of triage prevents ten minutes of applying the wrong framework.

DECISION TRIAGE

Answer these three questions:

1. Is this decision primarily about WHAT TO BUILD or WHAT TO ADD?

→ Use the Build/Add/Defer prompt

2. Is this decision primarily about PEOPLE (hiring, contractors, partnerships)?

→ Use the People Decision prompt

3. Is this decision primarily about DIRECTION (channel, product, strategy)?

→ Use the Pivot/Persist prompt

If it spans more than one category:

Start with the category where the risk is highest. Run the second prompt after the first if you're still uncertain.

If none of these fit:

This is likely either:

a) A tactical decision that doesn't need a framework: just decide

b) A major strategic bet that needs deeper analysis than these prompts provide
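If you track decisions in a script or spreadsheet export, the routing above can be sketched as a small function. This is a minimal sketch, not part of the original method: the subject keys (`"build"`, `"people"`, `"direction"`) and the numeric risk score are hypothetical inputs you would assign yourself.

```python
from enum import Enum


class Template(Enum):
    BUILD_ADD_DEFER = "Build/Add/Defer"
    PEOPLE = "People Decision"
    PIVOT_PERSIST = "Pivot/Persist"


# Hypothetical keys for the three triage questions.
SUBJECT_TO_TEMPLATE = {
    "build": Template.BUILD_ADD_DEFER,     # what to build or add
    "people": Template.PEOPLE,             # hiring, contractors, partnerships
    "direction": Template.PIVOT_PERSIST,   # channel, product, strategy
}


def triage(risk_by_subject: dict[str, int]) -> list[Template]:
    """Return the templates to run, highest-risk category first.

    risk_by_subject maps each subject the decision touches to a
    self-assessed risk score (higher = riskier). Unknown subjects are
    ignored. An empty list means no template fits: either the call is
    tactical (just decide) or it is a major bet needing deeper analysis.
    """
    relevant = [s for s in risk_by_subject if s in SUBJECT_TO_TEMPLATE]
    relevant.sort(key=lambda s: risk_by_subject[s], reverse=True)
    return [SUBJECT_TO_TEMPLATE[s] for s in relevant]


# A hire that is really about what to build: run Build/Add/Defer first.
print(triage({"people": 2, "build": 4}))
```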

The fast/slow split:

Before any prompt, one question that determines how much process the decision deserves:

"If I get this wrong, can I recover within 30 days at low cost?"

Yes → Decide fast. Act. Observe. Adjust. No framework needed.

No → Use the appropriate prompt template. Take 24 hours minimum.

The 24-hour rule for non-trivial decisions is worth encoding as a personal policy: for any decision that costs more than $500 or commits more than 20 hours, impose a mandatory 24-hour gap between forming a view and acting on it. The decision that seemed obvious at 9 PM frequently looks different at 9 AM. This gap is not delay; it's a filter that catches choices driven by the emotion of the moment rather than the logic of the situation.
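Both filters, the fast/slow split and the 24-hour gap, are simple enough to write down as a check. A minimal sketch, assuming you record each decision's cost, the hours it commits, and your honest answer to the 30-day recovery question; the `Decision` type and function names are illustrative, not a prescribed tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Thresholds from the personal policy described above.
COST_THRESHOLD_USD = 500
HOURS_THRESHOLD = 20
COOLING_OFF = timedelta(hours=24)


@dataclass
class Decision:
    cost_usd: float
    hours_committed: float
    cheaply_recoverable_in_30_days: bool  # answer to the fast/slow question
    view_formed_at: datetime


def needs_template(d: Decision) -> bool:
    """Fast/slow split: only hard-to-recover decisions get a template."""
    return not d.cheaply_recoverable_in_30_days


def needs_cooling_off(d: Decision) -> bool:
    """24-hour gap if the decision is 'slow' or crosses either threshold."""
    return (
        needs_template(d)
        or d.cost_usd > COST_THRESHOLD_USD
        or d.hours_committed > HOURS_THRESHOLD
    )


def earliest_action(d: Decision) -> datetime:
    """Earliest moment you may act on the view you've formed."""
    if needs_cooling_off(d):
        return d.view_formed_at + COOLING_OFF
    return d.view_formed_at


# A $600 tool decided at 9 PM: recoverable, but over the cost threshold.
late_call = Decision(600, 2, True, datetime(2024, 3, 4, 21, 0))
print(needs_template(late_call), earliest_action(late_call))
```

In this reading a decision can skip the template yet still trigger the 24-hour gap, as the $600 example does; the text states them as two separate filters.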

Template 1: The Build/Add/Defer Decision

The most frequent product decision a solo founder makes: something new is on the table. A feature request from a customer. An integration that would add value. A whole new product direction. A tool or automation to build. The question is always some version of: should I build this, and if so, when?

The failure mode: building based on one enthusiastic customer's request, or deferring everything out of a vague sense that the timing isn't right, or adding features that expand scope without expanding value.

Should I build/add/defer this?

WHAT IT IS:

[Describe the feature, tool, integration, or new product in one clear sentence]

WHAT PROMPTED THIS:

[Where did this idea come from? Customer request / Competitor feature / Your own idea / Contractor suggestion]

MY CURRENT STATE:

MRR: $[X]

Active customers: [N]

Hours per week available: [X]

Current build backlog: [What else is queued]

Run the BUILD/ADD/DEFER test:

1. PROBLEM VALIDATION:

Does this address a problem that multiple customers have described in their own words, or one person's feature request?

Signal threshold: 3+ customers independently naming the same pain = worth building. Fewer = probably not yet.

2. REVENUE TEST:

Will building this:

a) Prevent churn (retention play)

b) Enable new customer acquisition (growth play)

c) Justify a price increase (monetization play)

d) None of the above (nice to have)

Only a, b, or c justify build priority.

Option d = defer.

3. OPPORTUNITY COST:

What do I NOT build or work on this week if I build this?

Name it specifically, not "other things."

Is what I'm giving up more or less valuable than what I'm building?

4. SMALLEST VIABLE VERSION:

What's the version of this that takes 20% of the effort and delivers 60% of the value?

Could I test demand for this with a manual workaround before building anything?

5. TIMING:

Does building this in the next 30 days change anything meaningfully?

Or would building it in 90 days produce the same outcome?

If 90 days produces the same outcome: defer.

VERDICT:

BUILD NOW if: problem validated, revenue-connected, opportunity cost acceptable

ADD NEXT QUARTER if: validated but not urgent, or opportunity cost too high now

DEFER INDEFINITELY if: one person's request, no revenue connection, or a smaller version solves it without building

DON'T BUILD if: nice to have, expands scope without expanding value

Output: Verdict with one-paragraph reasoning.

What specifically changes this verdict?
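The verdict rules can be read as an ordered decision procedure. The sketch below is one reading of them, under a stated assumption: the template lists "nice to have" under both DEFER INDEFINITELY (no revenue connection) and DON'T BUILD, so this version sends anything with no revenue play to DEFER INDEFINITELY and reserves DON'T BUILD for scope expansion without added value. All parameter names are hypothetical.

```python
from enum import Enum
from typing import Optional


class RevenuePlay(Enum):
    RETENTION = "a"     # prevents churn
    GROWTH = "b"        # enables new customer acquisition
    MONETIZATION = "c"  # justifies a price increase


class Verdict(Enum):
    BUILD_NOW = "BUILD NOW"
    ADD_NEXT_QUARTER = "ADD NEXT QUARTER"
    DEFER_INDEFINITELY = "DEFER INDEFINITELY"
    DONT_BUILD = "DON'T BUILD"


VALIDATION_THRESHOLD = 3  # customers independently naming the same pain


def build_verdict(
    independent_mentions: int,
    revenue_play: Optional[RevenuePlay],  # None = (d) nice to have
    opportunity_cost_acceptable: bool,
    matters_within_30_days: bool,         # False if 90 days gives the same outcome
    workaround_solves_it: bool,           # a smaller or manual version is enough
    expands_scope_without_value: bool,
) -> Verdict:
    if expands_scope_without_value:
        return Verdict.DONT_BUILD
    # Validation and revenue connection come before timing.
    if (
        independent_mentions < VALIDATION_THRESHOLD
        or revenue_play is None
        or workaround_solves_it
    ):
        return Verdict.DEFER_INDEFINITELY
    if not opportunity_cost_acceptable or not matters_within_30_days:
        return Verdict.ADD_NEXT_QUARTER
    return Verdict.BUILD_NOW


print(build_verdict(4, RevenuePlay.RETENTION, True, True, False, False))
```

Putting timing after validation means an unvalidated request is deferred even when it feels urgent, which is the failure mode the template is guarding against.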

The "one customer asked" warning:

One enthusiastic customer request is not validation. It's a hypothesis. The prompt's threshold — three customers independently naming the same pain — exists because founders systematically overweight the most recent feedback they received. The customer who asked yesterday feels more important than the ten customers who never mentioned it. That's recency bias, not signal.

Before building anything based on a single request: ask five other customers if they've experienced the same problem. Unprompted yes from three of them = signal. Crickets from five = the one person who asked was an outlier.

Template 2: The People Decision

The second most costly recurring decision: anything involving a person. Hire a contractor, bring on a part-time VA, take on a strategic partner, give a referral partner an ongoing commission arrangement. Every people decision has higher stakes than it appears — relationship context, management overhead, and the difficulty of reversing the decision once a human is involved.

The failure mode: hiring based on a feeling of overwhelm rather than a specific, measurable gap; or deferring hiring indefinitely because the role "isn't quite ready" when the real reason is anxiety about management overhead.

Should I make this people decision?

THE DECISION:

[Hire / engage / partner with / commission: describe the specific role or arrangement]

WHAT THEY WOULD DO:

[List the specific recurring tasks or deliverables: not "help with marketing" but the three actual tasks]

WHAT PROMPTED THIS:

[What's happening that made you consider this? Be honest: overwhelm / specific bottleneck / specific opportunity / social pressure]

Run the PEOPLE DECISION test:

1. THE BOTTLENECK TEST:

Is the thing I'm hiring for a documented bottleneck (a specific task consuming more than 3 hours per week that I can measure) or a vague sense of being overwhelmed?

Bottleneck = hire may be justified.

Vague overwhelm = fix systems first.

2. THE AI-FIRST TEST:

Have I genuinely deployed AI for this function and measured the result?

Not "I tried it once": have I actually built the workflow and run it for 2+ weeks?

If no: do that first.

Most solo founder hiring impulses are AI-addressable without a hire.

3. THE SPECIFICITY TEST:

Can I write a one-page job spec for this role RIGHT NOW, with specific deliverables, quality criteria, and a success metric for week four?

If not: the role isn't ready to hire for.

Vague roles produce vague contractors.

4. THE MANAGEMENT OVERHEAD TEST:

Managing this person well requires approximately 3-5 hours per week (briefing, reviewing, feedback, coordination).

Do I have 3-5 hours to give?

If not: I'm hiring someone to create more work for me, not less.

5. THE REVERSIBILITY TEST:

If this doesn't work out in 60 days, what does unwinding it cost?

(Contractor = low cost. Employee = significant cost. Strategic partner = relationship cost.)

Is the potential upside worth that unwind cost?

VERDICT OPTIONS:

HIRE NOW: bottleneck documented, AI tested and insufficient, spec ready, capacity exists to manage

HIRE IN 30 DAYS: right decision, but spec not ready or AI not yet tested

DELAY: try AI-first for 30 days, reassess with data

DON'T HIRE: system problem rather than capacity problem, or can't afford management overhead

Output: Verdict + the one thing that most needs to be true before proceeding.
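The same approach works for the people template. A minimal sketch, assuming an ordering the template doesn't spell out: hard blockers first, then the AI-first test, then spec readiness. It also assumes the emotion check described in the next section decides between DELAY and HIRE IN 30 DAYS when AI hasn't been tested yet; that split is this sketch's interpretation. The thresholds (a task over 3 measured hours a week, 2 weeks of AI testing, 3 hours of management capacity) come from the prompt; the parameter names are hypothetical.

```python
from enum import Enum


class PeopleVerdict(Enum):
    HIRE_NOW = "HIRE NOW"
    HIRE_IN_30_DAYS = "HIRE IN 30 DAYS"
    DELAY = "DELAY"
    DONT_HIRE = "DON'T HIRE"


def people_verdict(
    measured_hours_per_week: float,  # time the task actually consumes
    ai_weeks_tested: float,          # weeks a real AI workflow has run
    ai_closed_gap: bool,             # did AI remove the bottleneck?
    spec_ready: bool,                # one-page spec with a week-four metric
    mgmt_hours_available: float,     # weekly hours you can spend managing
    emotion_driven: bool,            # result of the emotion check
) -> PeopleVerdict:
    # Bottleneck test: no measured 3+ hour task means a systems problem.
    if measured_hours_per_week <= 3:
        return PeopleVerdict.DONT_HIRE
    # Management overhead test: roughly 3-5 hours a week is needed.
    if mgmt_hours_available < 3:
        return PeopleVerdict.DONT_HIRE
    # AI-first test: fewer than 2 weeks of real use doesn't count.
    if ai_weeks_tested < 2:
        return PeopleVerdict.DELAY if emotion_driven else PeopleVerdict.HIRE_IN_30_DAYS
    # Assumption: if AI closed the gap, there is nothing left to hire for.
    if ai_closed_gap:
        return PeopleVerdict.DONT_HIRE
    # Specificity test: without a spec, the role isn't ready.
    if not spec_ready:
        return PeopleVerdict.HIRE_IN_30_DAYS
    return PeopleVerdict.HIRE_NOW


print(people_verdict(6, 3, False, True, 4, False))
```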

The emotion check:

Before running this prompt, name why you're considering this hire. One honest sentence. If the sentence contains "overwhelmed," "stressed," "can't keep up," or "burnt out" — this is an emotion-driven decision, not an evidence-driven one. Emotion-driven hires solve the feeling of overwhelm while often adding management overhead that compounds it.

The prompt's verdict of "DELAY — try AI-first for 30 days" exists specifically for this case. Run the AI workflow for 30 days. Measure actual time saved. If the gap is still there after AI is genuinely deployed, hire into the gap — not into the anxiety.
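The one-sentence emotion check is mechanical enough to run as a keyword scan over your stated reason. This is a crude sketch: it matches only the four phrases named above, so "burned out" or "drowning" would slip through unless you extend the list yourself.

```python
# Phrases named in the emotion check; extend with your own.
EMOTION_MARKERS = ("overwhelmed", "stressed", "can't keep up", "burnt out")


def is_emotion_driven(reason: str) -> bool:
    """True if the one-sentence reason for a hire contains an emotion marker."""
    # Lower-case and normalise curly apostrophes so "Can’t" matches "can't".
    text = reason.lower().replace("\u2019", "'")
    return any(marker in text for marker in EMOTION_MARKERS)


print(is_emotion_driven("I'm overwhelmed by support email"))
print(is_emotion_driven("Support email takes 6 measured hours a week"))
```

A positive result doesn't forbid the hire; it points you at the DELAY verdict and a 30-day AI trial first.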

Template 3: The Pivot/Persist Decision

The highest-stakes recurring decision: something isn't working, or a different direction looks more promising, and you're deciding whether to stay or change. Pivot decisions are where both failure modes operate simultaneously — moving too quickly out of panic, and moving too slowly out of sunk cost attachment.

The kill vs. persist framework has four outcomes worth knowing before running any pivot prompt: kill when both learning and metrics fail (no product-market fit signal). Persist when both succeed (clear traction). Pivot when learning succeeds but metrics fail (right customer, wrong solution). Persevere when metrics succeed but learning plateaus (optimization phase, not pivot phase). The prompt below operationalizes this framework.
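The four outcomes form a two-by-two grid over two yes/no questions, which a lookup table captures directly. A sketch only: whether learning and metrics "succeed" are judgment calls you supply, not values the code measures.

```python
def kill_or_persist(learning_succeeds: bool, metrics_succeed: bool) -> str:
    """Map the learning and metrics tests to the framework's four outcomes."""
    outcomes = {
        (False, False): "kill",       # no product-market fit signal
        (True, True): "persist",      # clear traction
        (True, False): "pivot",       # right customer, wrong solution
        (False, True): "persevere",   # optimization phase, not pivot phase
    }
    return outcomes[(learning_succeeds, metrics_succeed)]


for learning in (True, False):
    for metrics in (True, False):
        print(f"learning={learning!s:5} metrics={metrics!s:5} -> "
              f"{kill_or_persist(learning, metrics)}")
```

In the prompt's verdict list, "persevere" corresponds to OPTIMISE.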

Should I pivot or persist?

THE CURRENT DIRECTION:

[What you're currently doing: the product, channel, ICP, or strategy you're evaluating]

HOW LONG YOU'VE BEEN HERE:

[Weeks / months; be precise]

THE EVIDENCE FOR PERSISTING:

[What's working: include actual metrics, not feelings]

THE EVIDENCE FOR PIVOTING:

[What's not working: include actual metrics, not feelings]

WHAT A PIVOT WOULD MEAN:

[What specifically would change? This isn't rhetorical: name the new direction, not just "something different"]

Run the PIVOT/PERSIST test:

1. THE LEARNING TEST:

Are you still learning things that improve your understanding of the problem, customer, or solution?

Learning = you're in the right space, even if the metrics aren't there yet.

No learning = the current direction has hit its information ceiling.

2. THE METRICS TEST:

Are your key metrics (retention, conversion, active usage) improving, flat, or declining over the last 8 weeks?

Improving = persist.

Flat for 8+ weeks despite effort = strong signal to pivot.

Declining = urgent signal.

3. THE SUNK COST CHECK:

If you had built nothing yet and were evaluating this direction fresh today, with what you now know about the market, would you start here?

Yes = persist.

No = sunk cost may be keeping you here.

4. THE PIVOT CLARITY TEST:

Is the pivot direction specific enough to be a real direction?

("Different customers" is not specific. "Freelance designers instead of marketing agencies" is specific.)

If you can't name the new direction precisely, you're not ready to pivot; you're ready to explore.

5. THE MINIMUM TEST:

What's the smallest experiment that would tell you in 30 days whether the pivot direction has more signal than the current one?

A pivot should start as a test, not a full commitment.

VERDICT:

PERSIST: learning active, metrics improving or early, sunk cost check passes

OPTIMISE: metrics working but growth plateaued; in optimization phase, not pivot phase

TEST THE PIVOT: specific direction exists, minimum experiment definable, worth a 30-day parallel test

COMMIT TO PIVOT: clear signal, sunk cost check fails, direction specific

EXIT: learning stopped, metrics declining, pivot direction unclear; pause before investing more

Output: Verdict + what one data point in the next 30 days would change it.
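The improving/flat/declining call in the metrics test is the one input you can compute rather than judge. A minimal sketch, assuming one reading per week for a single key metric. Comparing the mean of the last four weeks with the four before them, and treating a change within ±2% as flat, are choices made for this example, not thresholds from the prompt.

```python
from statistics import mean

SIGNALS = {
    "improving": "persist",
    "flat": "strong signal to pivot (if you've been putting in effort)",
    "declining": "urgent signal",
}


def classify_trend(weekly: list[float], flat_band: float = 0.02) -> str:
    """Classify the last 8 weekly readings of one metric.

    flat_band is a hypothetical tolerance: a relative change within
    +/- flat_band between the two 4-week halves counts as flat.
    """
    if len(weekly) < 8:
        raise ValueError("need at least 8 weekly readings")
    window = weekly[-8:]
    earlier, recent = mean(window[:4]), mean(window[4:])
    if earlier == 0:
        # No baseline for a relative change; compare signs instead.
        if recent > 0:
            return "improving"
        return "declining" if recent < 0 else "flat"
    change = (recent - earlier) / abs(earlier)
    if change > flat_band:
        return "improving"
    if change < -flat_band:
        return "declining"
    return "flat"


# Weekly trial-to-paid conversion (%) over the last 8 weeks.
conversion = [4.1, 4.0, 4.2, 4.1, 4.1, 4.0, 4.2, 4.1]
trend = classify_trend(conversion)
print(trend, "->", SIGNALS[trend])
```

The sketch assumes higher is better; for a metric like churn, swap the "improving" and "declining" labels.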

The "explore first" rule for pivots:

Pivots committed to before the new direction is tested produce a different version of the same problem. The minimum test step in the prompt exists to enforce a specific sequence: define the experiment → run it for 30 days → read the signal → commit or return. Skipping to commitment before the experiment is how founders end up three months into a pivot direction that has less signal than what they left.

The Decision Log: Getting Smarter Over Time

The three prompts above improve any individual decision. The decision log is what makes decision-making improve as a capability over time.

Most founders make a decision, move on, and reconstruct the same reasoning process six months later for a similar decision — drawing on memory that has been distorted by outcome (if it worked, the reasoning was good; if it didn't, the reasoning was flawed, regardless of whether that's actually true). The log captures reasoning at decision time, before outcome is known, so you can compare what you thought would happen against what actually happened.

The log entry format (5 minutes per decision):

Keep this in a single Notion database. One row per significant decision.

DECISION LOG ENTRY

DATE: [Today]

CATEGORY: Build-Add-Defer / People / Pivot-Persist

DECISION: [One sentence: the decision you're facing]

TEMPLATE VERDICT: [What the AI prompt returned]

YOUR ACTUAL DECISION: [What you decided, and if different from the verdict, why]

CONFIDENCE: 1-5 (1 = very uncertain, 5 = clear)

REVERSIBILITY: Easy / Moderate / Hard

WHAT YOU EXPECT TO HAPPEN:

[One paragraph: what outcome are you expecting, and on what timeline?]

BIGGEST RISK:

[The one thing most likely to make this decision wrong]

--- 30 DAYS LATER ---

EARLY SIGNAL: [What's happening]

ON TRACK: Yes / No / Too early

--- 90 DAYS LATER ---

OUTCOME: [What actually happened]

VS EXPECTATION: Better / As expected / Worse

BIGGEST RISK REALISED: Yes / No / Differently

ONE LESSON: [Single sentence: the reusable insight]
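The article keeps the log in a Notion database; if you'd rather keep it in code or a local file, the same entry maps onto a small record type. A sketch under that assumption: the class and field names are hypothetical, `override_reason` stands in for the "if different from the verdict, why" part of YOUR ACTUAL DECISION, and `checkins_due` simply flags which of the 30- and 90-day reviews are due and still empty.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class DecisionLogEntry:
    # Filled in at decision time, before the outcome is known.
    logged_on: date
    category: str          # "Build-Add-Defer" / "People" / "Pivot-Persist"
    decision: str
    template_verdict: str
    actual_decision: str
    confidence: int        # 1 = very uncertain, 5 = clear
    reversibility: str     # "Easy" / "Moderate" / "Hard"
    expectation: str
    biggest_risk: str
    override_reason: Optional[str] = None  # set only if you overrode the verdict
    # 30-day check-in.
    early_signal: Optional[str] = None
    on_track: Optional[str] = None         # "Yes" / "No" / "Too early"
    # 90-day check-in.
    outcome: Optional[str] = None
    vs_expectation: Optional[str] = None   # "Better" / "As expected" / "Worse"
    risk_realised: Optional[str] = None    # "Yes" / "No" / "Differently"
    lesson: Optional[str] = None

    def __post_init__(self) -> None:
        if not 1 <= self.confidence <= 5:
            raise ValueError("confidence must be between 1 and 5")

    @property
    def overrode_verdict(self) -> bool:
        return self.override_reason is not None

    def checkins_due(self, today: date) -> list[str]:
        """Check-ins whose date has passed but whose fields are still empty."""
        due = []
        if self.early_signal is None and today >= self.logged_on + timedelta(days=30):
            due.append("30-day")
        if self.outcome is None and today >= self.logged_on + timedelta(days=90):
            due.append("90-day")
        return due
```

For example, an entry logged on 1 January with no follow-ups filled in would report `["30-day"]` on 1 February.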

The quarterly log review prompt:

Review my decision log for patterns.

[Paste all entries from the last quarter, with every field filled in, including outcomes]

Analyze:

1. ACCURACY: When I expected a good outcome, how often did I get one? Am I well-calibrated, or systematically over- or under-confident?

2. CATEGORY PATTERNS: Which decision category do I handle best? Which consistently produces worse outcomes than expected?

3. VERDICT ADHERENCE: When I overrode the AI prompt verdict, was I right to?

Pattern: override + good outcome = my judgment is better than the template here

Override + bad outcome = I'm rationalizing, not reasoning

4. RISK RECOGNITION: Did I correctly identify the biggest risk before the decision? Or did I consistently miss the actual failure mode?

5. ONE CHANGE: What single change to my decision process would most improve future outcomes, based on this quarter?

Output: 3-4 sentences per section, then the one pattern I most need to see.
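Sections 1 and 3 of the review are counting exercises, so you can check the AI's summary against a few lines of arithmetic. A minimal sketch, assuming each reviewed entry is reduced to its logged confidence, whether you overrode the verdict, and the 90-day VS EXPECTATION field; counting "Better" or "As expected" as a good outcome is this sketch's simplification, and the sample entries are made up. With a single quarter of entries the rates will be noisy, so treat them as directional until several quarters accumulate.

```python
from collections import defaultdict
from typing import NamedTuple


class Reviewed(NamedTuple):
    confidence: int       # 1-5, as logged at decision time
    overrode_verdict: bool
    vs_expectation: str   # "Better" / "As expected" / "Worse"


def met_expectation(r: Reviewed) -> bool:
    return r.vs_expectation in ("Better", "As expected")


def hit_rate_by_confidence(entries: list[Reviewed]) -> dict[int, float]:
    """Share of decisions at each confidence level that met expectations.

    Well calibrated: the rate rises with confidence. A low rate at 4-5
    suggests overconfidence; a high rate at 1-2 suggests underconfidence.
    """
    counts: dict[int, list[int]] = defaultdict(lambda: [0, 0])  # [hits, total]
    for r in entries:
        counts[r.confidence][1] += 1
        counts[r.confidence][0] += met_expectation(r)
    return {c: hits / total for c, (hits, total) in sorted(counts.items())}


def override_record(entries: list[Reviewed]) -> tuple[int, int]:
    """(good, bad) outcome counts among decisions where you overrode the verdict."""
    overrides = [r for r in entries if r.overrode_verdict]
    good = sum(met_expectation(r) for r in overrides)
    return good, len(overrides) - good


quarter = [
    Reviewed(5, False, "Worse"),
    Reviewed(5, True, "Worse"),
    Reviewed(4, False, "As expected"),
    Reviewed(2, True, "Better"),
]
print(hit_rate_by_confidence(quarter))
print(override_record(quarter))
```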

The quarterly review compounds over time. After two or three quarters, you have enough data to see your actual decision profile — the category you systematically misread, the confidence level at which you're well-calibrated versus overconfident, the specific risk types you consistently miss. That self-knowledge is the most valuable thing the decision log produces.

The Decision Batching Habit

Decision fatigue is real: decision quality deteriorates as the day progresses, and solo founders face hundreds of choices daily — from which task deserves attention first to whether a feature request justifies a sprint. Each choice chips away at cognitive bandwidth regardless of its size.

The antidote isn't making fewer decisions. It's batching them.

Set aside one hour per week — the same day, the same time, every week — for non-urgent decisions. Any decision that's medium-stakes and reversible within 30 days goes on a list throughout the week. The list gets processed in the batching session using whichever template applies.

What doesn't go in the batch: urgent decisions with a real deadline, any decision where waiting 7 days causes genuine cost, and decisions small enough that a framework adds more overhead than the decision itself.

What does go in the batch: feature prioritization, contractor evaluation, channel tests, pricing experiments, tool evaluations, partnership enquiries. Most medium-stakes decisions aren't actually urgent — they feel urgent because they're unresolved, not because a clock is actually running.

The batching session also has one rule: no decision made in the last 10 minutes of the session. Decision quality at the end of a focused hour drops meaningfully. If you haven't decided by minute 50, add it to next week's batch. You haven't lost anything. You've given it one more week of passive processing, which often produces clarity the batching session couldn't.
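The inclusion rules and the minute-50 cutoff can be written as a small routing check you run when a decision lands on your desk. A sketch only: the field names are hypothetical, and the last branch (a decision that isn't urgent but is too hard to reverse for the batch) is this sketch's reading, sending it to the matching template with the 24-hour gap rather than to the batch.

```python
from dataclasses import dataclass

CUTOFF_MINUTE = 50  # no decisions in the last 10 minutes of a 60-minute session


@dataclass
class PendingDecision:
    name: str
    trivial: bool                    # a framework would cost more than the decision
    waiting_a_week_costs: bool       # a real deadline or genuine cost of delay
    medium_stakes: bool
    reversible_within_30_days: bool


def route(d: PendingDecision) -> str:
    if d.trivial:
        return "just decide"
    if d.waiting_a_week_costs:
        return "decide now"
    if d.medium_stakes and d.reversible_within_30_days:
        return "weekly batch"
    return "template + 24-hour gap"


def may_decide(minute_into_session: int) -> bool:
    """Still undecided at minute 50? Carry it to next week's batch."""
    return minute_into_session < CUTOFF_MINUTE


print(route(PendingDecision("Trial a new email tool", False, False, True, True)))
```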

The Defaults: Pre-Made Decisions for Recurring Calls

The most underused decision tool for solo founders is the pre-made default — a standing rule for a specific recurring decision type that removes the decision from the queue entirely.

Defaults work for decisions that:

Repeat in the same form regularly

Have a clearly better answer given your current stage

Don't require fresh context each time

Help me build a set of personal decision defaults.

MY STAGE: [Pre-revenue / $0-5K MRR / $5-20K MRR / Growing]

MY CONSTRAINTS: Solo, [X] hours/week, $[X] monthly budget

Generate standing defaults for these recurring decision types:

1. FEATURE REQUESTS:

"We only build a feature when..."

[Stage-appropriate threshold]

2. TOOL PURCHASES:

"We only buy a new tool when..."

[Criteria: bottleneck exists, free tier insufficient, etc.]

3. CONTRACTOR WORK:

"We only hire for a task when..."

[Time threshold, AI-first test, etc.]

4. CONTENT INVESTMENT:

"We only invest in a new content channel when..."

[Criteria for adding vs. staying focused]

5. PARTNERSHIP ENQUIRIES:

"We only pursue a partnership when..."

[Minimum criteria for taking a meeting]

6. PRICE CHANGES:

"We only change pricing when..."

[Evidence threshold before adjusting]

Each default should be:

- Specific enough to produce a yes/no answer

- Stage-appropriate (not aspirational for a future version of the business)

- Revisable quarterly as stage changes

Output: 6 decision defaults I can paste into my Notion strategy page and reference before making each call.

A founder with six standing defaults removes six recurring decision types from the weekly cognitive load entirely. The feature request that would normally generate an hour of anxious deliberation gets answered in 30 seconds: does it meet the default? Yes or no. Next.
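Once the prompt has produced your defaults, each one is a yes/no predicate. A minimal sketch with three of the six, using thresholds that appear elsewhere in this article (3+ independent customer mentions; a bottleneck plus an insufficient free tier; a task over 3 hours a week plus 2+ weeks of AI testing). The fact names are hypothetical, and your own defaults will differ by stage.

```python
from typing import Any, Callable, Mapping

Facts = Mapping[str, Any]


def feature_default(f: Facts) -> bool:
    # Build only when 3+ customers independently name the same pain.
    return f["independent_customer_mentions"] >= 3


def tool_default(f: Facts) -> bool:
    # Buy only when a bottleneck exists and the free tier can't handle it.
    return f["bottleneck_exists"] and f["free_tier_insufficient"]


def contractor_default(f: Facts) -> bool:
    # Hire only for a measured 3+ hour/week task after 2+ weeks of AI testing.
    return f["hours_per_week"] > 3 and f["ai_weeks_tested"] >= 2


DEFAULTS: dict[str, Callable[[Facts], bool]] = {
    "feature_request": feature_default,
    "tool_purchase": tool_default,
    "contractor_work": contractor_default,
}


def meets_default(kind: str, facts: Facts) -> bool:
    """Answer a recurring call in one lookup; a missing fact raises KeyError."""
    return DEFAULTS[kind](facts)


print(meets_default("feature_request", {"independent_customer_mentions": 1}))
```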

Common Mistakes

1. Using AI to reach a predetermined conclusion

The most common misuse of decision prompts: describing the situation in a way that guarantees the verdict you've already chosen. AI pattern-matches to the framing you provide. If you describe the evidence for building and omit the evidence against, the verdict is BUILD, which is useless. The templates only work if you supply both the evidence for and the evidence against, explicitly, before generating a verdict. Honest inputs are the only inputs that produce useful outputs.

2. Applying the wrong template

A pivot decision run through the Build/Add/Defer template produces a build verdict on a direction question — which misses the point entirely. The triage test exists because the wrong framework is worse than no framework. Route carefully before running.

3. Logging decisions but never reviewing

A decision log with no quarterly review is a diary. The pattern recognition happens at the review, not at the logging. Founders who log but don't review get the discipline benefit without the compounding intelligence benefit. The quarterly prompt takes 20 minutes. Run it.

4. Overriding the verdict without naming why

When you override what the AI prompt returned and do something different, log the override and the reason. If you override correctly (your judgment was better than the template), you learn something about where your judgment is strong. If you override incorrectly, you learn something about where you rationalize rather than reason. Without logging the override, you lose both lessons.

5. Treating medium-stakes decisions as urgent

The batching habit exists because most medium-stakes decisions aren't actually urgent — they feel urgent because they're unresolved. Moving a decision from "I need to decide this now" to "this goes in Thursday's batch" rarely costs anything and frequently produces better outcomes because the passive processing week provides clarity the hot-decision moment couldn't.

The Real Talk on Decision Systems

Solo founders move too slowly out of fear, or too quickly out of panic — because the cognitive load of deciding alone, without a co-founder's pressure-testing, without a board's external perspective, produces both paralysis and reactivity depending on the emotional weather of the day.

The templates in this article don't replace judgment. They structure it. The Build/Add/Defer prompt doesn't tell you what to build — it forces you to articulate whether the problem is validated, whether it's revenue-connected, and what you're giving up. That articulation is what separates a decision from a guess.

The decision log doesn't make you smarter immediately. It makes you smarter in a compounding way — each quarter's review adding a layer of genuine self-knowledge about how your judgment actually works, not how you think it works.

One template. One log entry. One quarterly review.

That's it.
