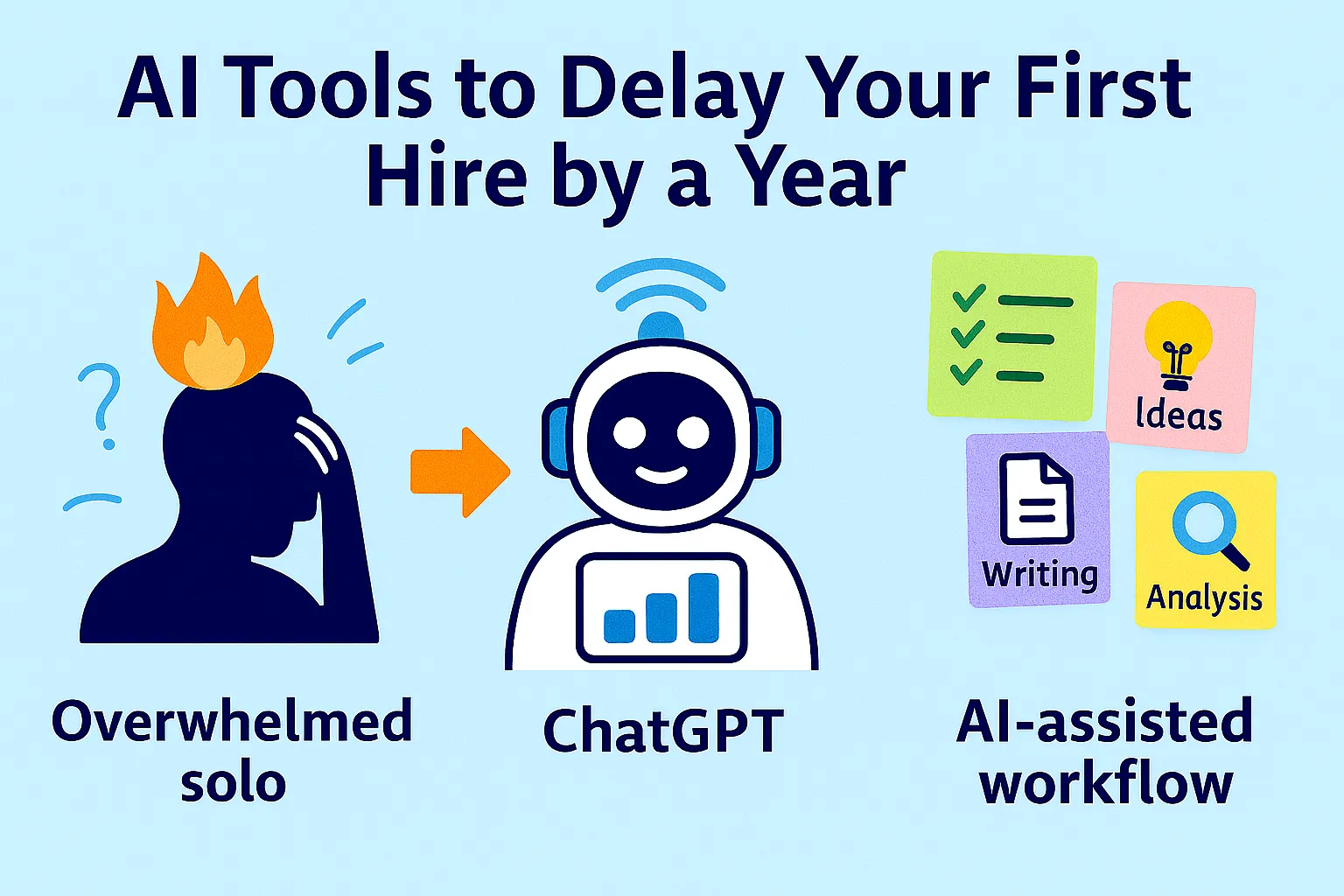

The pressure to hire arrives earlier than it should.

You're at $5K MRR. Things feel busy. You're context-switching constantly. A voice in your head says: if I just had someone to help with X, I could focus on what matters. X is usually something like customer support, or content, or operations. Something real. Something that genuinely takes time.

So you hire. A part-time VA at $1,500/month. Or a contractor for specific projects. Or, if funding allows, a first full-time employee at $60-80K/year.

Six months later, a significant portion of founders who hired early regret it — not because the person was wrong, but because the timing was. The overhead of managing someone, onboarding them, maintaining quality, handling the relationship, recalibrating when the work changes — that overhead arrives whether or not the business was ready for it. You traded one kind of busy for a more complex kind of busy, and the core problem (too many tasks competing for your attention) didn't go away.

The question worth asking before you hire: is this a capacity problem, or a system problem?

A capacity problem requires more hands. A system problem requires better systems. Hiring to solve a system problem doesn't fix the system — it just delegates the chaos to someone else, at significant cost.

Most early-stage solo founders have system problems, not capacity problems. The tasks piling up aren't piling up because there aren't enough hours — they're piling up because there's no structured workflow capturing and processing them. AI doesn't add hours; it adds systems. And at $5-15K MRR, systems are usually what's actually missing.

This article is the honest answer to the hiring question: what AI can safely cover, what it genuinely can't, and the exact cost math that makes the timing decision clear.

The Real Cost of Hiring Too Early

Before the AI comparison, name what early hiring actually costs — because most founders calculate salary and stop there.

The direct costs:

Part-time VA (10-20 hrs/week): $800-2,000/month

Part-time contractor (specialized): $1,500-4,000/month

First full-time employee (US, entry-level): $45-65K/year base salary = $3,750-5,400/month, plus benefits, payroll taxes, equipment, and software licenses (together roughly 25-30% on top)

First full-time employee (total loaded cost): $56-85K/year = $4,700-7,100/month

The hidden costs most founders miss:

Management overhead: Managing one person well takes 3-5 hours per week minimum — regular check-ins, feedback, context-setting, quality review. That's time that comes directly out of your building-and-selling hours.

Onboarding time: A new hire takes 30-90 days to reach full productivity. During that period, you're doing the work and explaining the work. Net capacity often goes negative in the first month.

Scope instability: At early stage, the work changes constantly. What you hired someone to do in January may be 60% different by April. Managing that transition — retraining, redirecting, handling frustration — creates friction that compounds.

Hiring mistakes: The wrong hire at early stage doesn't just cost salary. It costs the months spent realizing it's wrong, the time managing the situation, and the time to find a replacement. First-hire failure rates are meaningfully high even for experienced founders.

What 12 more months of AI-only operation preserves:

$15,000-50,000 in avoided labor costs (depending on what you would have hired)

3-5 hours per week of management overhead redirected to growth

Full strategic flexibility (you can pivot without a human's role being affected)

Compounding equity (every dollar not spent on salary is retained ownership)

The question isn't "can I afford to hire?" It's "can I afford not to delay?"

The Task Audit: What's Actually on Your Plate

The decision to hire or not hire starts with an honest inventory. Not "what feels overwhelming" but "what specific tasks are consuming time that shouldn't be consuming it?"

Run this audit before making any decision:

Help me audit my weekly tasks to identify what can be handled by AI vs. what genuinely requires human hiring.

Here are the tasks I do regularly:

[List everything you do in a typical week. Be specific: "write and send client update emails", not "communication"]

For each task, categorize:

CATEGORY A (AI READY):

Structured, repeatable, follows clear rules, output quality is verifiable, no relationship context required.

→ These can be handed to AI today.

CATEGORY B (AI ASSISTED):

Requires judgment but has a repeatable framework. AI produces first draft or analysis; human reviews and approves.

→ These require you but AI cuts time by 60-80%.

CATEGORY C (HUMAN REQUIRED):

Requires genuine relationship context, emotional intelligence, creative judgment, or strategic decision-making that AI cannot replicate.

→ These justify hiring or remain your responsibility.

CATEGORY D (SHOULD BE ELIMINATED):

Tasks that exist because of missing systems, not genuine business need. (e.g., manually compiling reports that should be automated; chasing information that should be in a database)

→ Fix the system, don't hire for the symptom.

For each task: assign category, estimate hours per week, and for Category A/B, suggest the specific AI tool or workflow that handles it.

Output: Ranked list of highest-ROI automation targets (Category A tasks consuming the most time).

Most founders who run this audit discover that 40-60% of their weekly tasks fall in Category A or B — automatable today, or AI-assisted with minimal oversight. The remaining 40-60% is often split between genuinely human-required work and Category D tasks that should be eliminated, not hired for.
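If you would rather keep the audit results in a script than in a chat window, the ranking step can be sketched in a few lines of Python. This is a minimal illustration, not part of the prompt itself: the `Task` class, both function names, and the example week are hypothetical, and the share is measured in hours rather than task count.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    category: str          # "A", "B", "C", or "D", as defined in the audit prompt
    hours_per_week: float


def rank_automation_targets(tasks):
    """Category A tasks, sorted so the biggest time sinks come first."""
    category_a = [t for t in tasks if t.category == "A"]
    return sorted(category_a, key=lambda t: t.hours_per_week, reverse=True)


def automatable_share(tasks):
    """Fraction of weekly hours that sit in Category A or B."""
    total = sum(t.hours_per_week for t in tasks)
    if total == 0:
        return 0.0
    covered = sum(t.hours_per_week for t in tasks if t.category in ("A", "B"))
    return covered / total


# Hypothetical week, for illustration only.
example_tasks = [
    Task("Email triage", "A", 3.0),
    Task("Weekly metrics report", "A", 2.0),
    Task("Blog first drafts", "B", 4.0),
    Task("Sales calls", "C", 6.0),
    Task("Manually compiling reports", "D", 1.0),
]

for task in rank_automation_targets(example_tasks):
    print(f"{task.name}: {task.hours_per_week} hrs/week")
print(f"Hours in Category A or B: {automatable_share(example_tasks):.0%}")
```

Swap in your own task list and the first lines printed are the automation targets to build first.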

What AI Handles Better Than a Junior Hire

This is the specific claim this article needs to defend. Not "AI is useful" — but "for these specific task categories, AI at $50-150/month outperforms a junior hire at $3,500-5,000/month."

Operations and Admin

What AI handles completely:

Email triage and labeling (Zapier + AI step)

Calendar management and meeting confirmations (Calendly + Zapier)

File organization and naming (Make + OpenAI)

Weekly metrics compilation and reporting (Zapier + AI + your email)

Invoice creation and payment follow-ups (Stripe + PandaDoc + Zapier)

Contract generation from templates (PandaDoc + template variables)

Client onboarding sequences (Zapier multi-step trigger)

SOP creation from Loom recordings (Loom AI + structured prompts)

Meeting summaries and action item extraction (Fathom + Notion)

Task extraction from emails and voice notes (Zapier + AI)

Honest ceiling: A fully configured operations automation stack handles 70-80% of what an ops VA does — the structured, repeatable, rule-based portion. What it doesn't handle: judgment calls that require context ("should I reschedule this client meeting or push back?"), relationship nuance ("this client is frustrated, how should I respond?"), and truly novel situations that fall outside any documented workflow.

Cost comparison for ops/admin:

Junior VA (10 hrs/week, $20/hr): $800-1,000/month

AI ops stack (Zapier Starter + Make + OpenAI API): $55-80/month

Savings: $720-940/month, or $8,640-11,280/year

The VA handles 100% of tasks. The AI stack handles 70-80% and routes the remaining 20-30% to you — which typically represents 1-2 hours of your week instead of the 10 hours you'd spend doing it all manually.

Customer Support

What AI handles completely:

FAQ responses for documented issues

Password reset and account access guidance

Standard billing questions

How-to questions covered by your documentation

Routing and categorizing incoming tickets

First-response acknowledgments

What AI handles partially (AI draft, you review):

Complaints requiring empathy and judgment

Bug reports with ambiguous reproduction steps

Requests for features or exceptions

Churning customers wanting to cancel

What requires a human:

Escalated complaints from high-value accounts

Relationship-sensitive conversations

Situations requiring negotiation or policy exceptions

Any customer showing repeated frustration

Honest ceiling: A well-configured AI support setup resolves 50-65% of tickets fully. For a product with clear, documented answers to common questions, the ceiling approaches 70%. For complex or highly variable products, it may sit at 40-50%. This is not a failure — 60% deflection on 100 tickets/month is 60 tickets you don't write. At 5 minutes per ticket, that's 5 hours returned to you monthly.
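The deflection arithmetic is easy to rerun with your own numbers. The sketch below assumes a flat handling time per ticket; the function name is hypothetical, and the inputs in the first call are the ones used in the paragraph above.

```python
def hours_returned_per_month(tickets_per_month, deflection_rate, minutes_per_ticket):
    """Hours you no longer spend writing replies when AI fully resolves a share of tickets."""
    deflected_tickets = tickets_per_month * deflection_rate
    return deflected_tickets * minutes_per_ticket / 60


# The example above: 100 tickets/month, 60% deflection, 5 minutes each (about 5 hours).
print(hours_returned_per_month(100, 0.60, 5))

# The ceilings quoted above, at the same volume and handling time.
for rate in (0.40, 0.50, 0.65, 0.70):
    hours = hours_returned_per_month(100, rate, 5)
    print(f"{rate:.0%} deflection: {hours:.1f} hours/month")
```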

Cost comparison for support:

Part-time support contractor (5 hrs/week, $20/hr): $400-500/month

AI support stack (Help Scout $20 + knowledge base setup): $20/month + one-time 4-hour setup

Savings: $380-480/month, or $4,560-5,760/year

Caveat: below 20 tickets/week, AI support tooling is overkill. Handle support personally via email until volume justifies the setup. Above 20 tickets/week, the ROI is immediate.

Basic Marketing and Content

What AI handles completely:

First drafts of blog posts, emails, social posts

Content repurposing (blog → LinkedIn → newsletter → Twitter/X)

Email subject line generation and testing

Ad copy variations for testing

SEO meta descriptions and title tags

Newsletter compilation from existing content

Basic competitor monitoring and summarization

What AI handles partially:

Content strategy decisions (AI generates options, you choose)

Thought leadership pieces (AI drafts, your perspective shapes it)

Community engagement (AI drafts responses, you approve)

What requires a human:

Relationship-based outreach and partnerships

High-stakes brand positioning decisions

Original insight and perspective (the voice that makes content shareable)

Community trust and authentic engagement at scale

Honest ceiling: AI produces content at roughly 70-80% of what a good content writer produces — faster, cheaper, infinitely consistent, but missing the specific lived-experience insight and distinctive voice that makes exceptional content stand out. For most bootstrapped founders at early stage, 70-80% quality at $0 incremental cost beats 95% quality at $2,000/month.

Cost comparison for content:

Part-time content writer (5-10 hrs/week, $40-60/hr): $800-2,400/month

AI content stack (Claude Pro/ChatGPT Plus + your 30-60 min/week editing): $20/month

Savings: $780-2,380/month, or $9,360-28,560/year

This is the highest-leverage AI substitution for most content-driven businesses. A part-time content writer at $1,500/month costs more annually ($18,000) than most bootstrapped founders' entire operating budget.

Research and Analysis

What AI handles completely:

Competitive pricing research and normalization

Customer review mining and synthesis

Market trend summaries from public data

Weekly metrics interpretation and briefing

ICP profiling from customer data

Community signal scanning and synthesis

What AI handles partially:

Strategic recommendations (AI generates options, you evaluate)

Qualitative customer research synthesis (AI analyzes, you interpret)

What requires a human:

Primary customer interviews

Nuanced judgment about ambiguous signals

Relationships with industry contacts and experts

Cost comparison for research:

Part-time research analyst (5 hrs/week, $30/hr): $600/month

AI research stack (Claude Pro or ChatGPT Plus, already in your stack): $0 marginal cost

Savings: $600/month, or $7,200/year

The Full Cost Comparison

Here's the complete picture: AI stack vs. a part-time VA vs. a first full-time employee, across the functions they cover.

Annual AI Stack (covers ops + support + content + research):

Claude Pro or ChatGPT Plus: $240/year

Zapier Starter: $348/year

Make free tier: $0

Help Scout (support): $240/year

Fathom (meetings): $0

PandaDoc Starter: $420/year

Loom Starter: $150/year

Plausible (analytics): $108/year

Total AI stack: ~$1,500/year ($125/month)

Coverage: 65-75% of functions a junior hire would cover
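To check the total against your own tool choices, the line items above can be summed in a short Python sketch. The prices are the ones listed in this article, not verified vendor pricing, and the variable names are illustrative.

```python
# Annual prices as listed above; confirm current vendor pricing before relying on them.
ai_stack_annual_usd = {
    "Claude Pro or ChatGPT Plus": 240,
    "Zapier Starter": 348,
    "Make free tier": 0,
    "Help Scout": 240,
    "Fathom": 0,
    "PandaDoc Starter": 420,
    "Loom Starter": 150,
    "Plausible": 108,
}

annual_total = sum(ai_stack_annual_usd.values())
monthly_total = annual_total / 12

print(f"Annual: ${annual_total:,}")
print(f"Monthly: ${monthly_total:,.2f}")
```

The listed prices sum to $1,506 a year, which is where the rounded ~$1,500 figure comes from.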

Part-Time VA (20 hrs/week, $20-25/hr):

Salary: $20,800-26,000/year

No benefits (contractor): $0

Management overhead (yours): ~150 hrs/year × your hourly value

Onboarding time: ~40 hours first month

Total cost: $22,000-28,000/year

Coverage: 80-90% of functions, with relationship context

First Full-Time Employee (US, entry-level):

Base salary: $45,000-60,000/year

Payroll taxes (~15%): $6,750-9,000/year

Benefits (health, PTO, etc.): $5,000-12,000/year

Equipment + software: $2,000-4,000/year

Management overhead (yours): ~250 hrs/year × your hourly value

Total loaded cost: $58,750-85,000/year

Coverage: 90-100% of functions, including judgment calls
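The loaded-cost range for the full-time hire can be reproduced from its components. This is a sketch under the figures in the breakdown above (15% payroll taxes, benefits and equipment as listed); the function name is hypothetical, and your management time is left out, as it is in the stated total.

```python
def loaded_cost(base_salary, benefits, equipment, payroll_tax_rate=0.15):
    """Annual loaded cost: salary plus payroll taxes, benefits, and equipment/software.

    Management overhead is excluded, matching the total in the breakdown above.
    """
    return base_salary + base_salary * payroll_tax_rate + benefits + equipment


low = loaded_cost(45_000, benefits=5_000, equipment=2_000)
high = loaded_cost(60_000, benefits=12_000, equipment=4_000)
print(f"${low:,.0f} to ${high:,.0f} per year")
```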

The ROI math:

At $5K MRR ($60K ARR), a first full-time employee at $70K loaded cost represents 117% of annual revenue. That's not a hire; it's a bet-the-company move.

At $15K MRR ($180K ARR), that same hire represents 39% of revenue. Still significant, but approaching defensible.

At $25K MRR ($300K ARR), the math changes entirely — the hire pays for itself if it enables $70K+ in additional revenue generation.

The AI stack at $1,500/year represents 2.5% of $60K ARR. The coverage is narrower. The management overhead is lower. The strategic flexibility is greater.
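The percentages above all come from one ratio: annual cost divided by ARR. A minimal Python sketch, using the $70K loaded cost and $1,500 stack cost from this section (the function and constant names are illustrative):

```python
def cost_share_of_arr(annual_cost, mrr):
    """Annual cost as a fraction of annual recurring revenue (MRR x 12)."""
    arr = mrr * 12
    return annual_cost / arr


HIRE_LOADED_COST = 70_000   # loaded cost used in the example above
AI_STACK_COST = 1_500       # approximate annual AI stack cost from this section

for mrr in (5_000, 15_000, 25_000):
    share = cost_share_of_arr(HIRE_LOADED_COST, mrr)
    print(f"${mrr:,} MRR: hire is {share:.0%} of ARR")

print(f"AI stack at $5,000 MRR: {cost_share_of_arr(AI_STACK_COST, 5_000):.1%} of ARR")
```

Plug in your own MRR and the loaded cost of the role you are considering to see where you sit on that curve.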

The honest comparison: For most solo founders under $15K MRR, the AI stack covers enough — not everything, but enough — that the 12-month delay on hiring preserves $20,000-80,000 in cash and optionality while systems are built and product-market fit is confirmed.

When AI Is Genuinely Not Enough

The previous sections made the case for AI. Now the honest counter-case, because 55% of employers who laid off staff in favor of AI report regretting it, and the lesson cuts both ways: replacing humans with AI before you understand what you're losing creates its own problems.

When you actually need a human:

1. When the task requires accumulated relationship context

A VA who's worked with you for six months knows that Client A communicates passive-aggressively and needs direct reassurance, that Contractor B needs deadlines framed as priorities not deadlines, and that Prospect C is almost ready to buy but gets spooked by urgency. AI doesn't accumulate this context across months of real interactions. When relationship nuance is the primary value of the task, you need a human.

2. When quality variance is catastrophic, not inconvenient

AI makes mistakes. In most contexts, mistakes are caught and corrected. In some contexts — legal documents with binding implications, financial communications, public statements on sensitive topics, anything where an error could damage a key relationship — the cost of a mistake is high enough that human oversight isn't optional. Don't AI-automate anything where a 3% error rate has disproportionate consequences.

3. When the task requires genuine creative judgment that you can't train prompts to replicate

AI produces excellent average-quality creative work. It struggles with the distinctive, unexpected, perspective-driven decisions that make exceptional work. If your competitive advantage is your distinctive creative voice — in writing, in design, in product vision — AI assists but doesn't replace the judgment calls that produce that distinctiveness.

4. When volume exceeds what you can reasonably review

The 30-40% of AI output that requires human review is only manageable if that 30-40% fits within your available review time. At low ticket volume (20/week), reviewing escalated support tickets takes 20 minutes. At 200 tickets/week with 40% escalation, you have 80 tickets to review every week, a standing workload that competes directly with building and selling. Volume thresholds matter. AI delays hiring; it doesn't prevent it forever.

5. When you've hit the "complexity ceiling"

AI handles tasks that can be described as workflows. When the work requires synthesizing ambiguous information across multiple systems, making judgment calls without clear rules, or navigating genuinely novel situations — AI hits its ceiling. The more complex and judgment-intensive your operations become, the more the ceiling matters.
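The volume threshold in point 4 is worth computing rather than guessing. The sketch below takes the 200 tickets/week and 40% escalation example from that point; the 10 minutes per escalated ticket is an assumed figure, not one from this article, and the function name is hypothetical.

```python
def weekly_review_load(tickets_per_week, escalation_rate, minutes_per_review):
    """Return (escalated tickets, review hours) for one week."""
    escalated = round(tickets_per_week * escalation_rate)
    review_hours = escalated * minutes_per_review / 60
    return escalated, review_hours


# 200 tickets/week at 40% escalation is the example from point 4.
# 10 minutes per escalated ticket is an assumption; measure your own.
escalated, hours = weekly_review_load(200, 0.40, minutes_per_review=10)
print(f"{escalated} escalated tickets, about {hours:.1f} review hours per week")
```

Compare the resulting hours against the time you can actually set aside for review; when the first number outgrows the second, you have reached the threshold.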

The honest threshold:

AI is enough when: tasks are structured and repeatable, mistakes are recoverable, quality at 70-80% is sufficient, and volume is within your review capacity.

A human is needed when: tasks require accumulated relationship context, mistakes are expensive, quality requirements are 90%+, or volume has outgrown your review capacity.

Most solo founders hit the human threshold somewhere between $15K and $25K MRR, when the volume and complexity of operations exceed what AI can handle within the founder's available oversight time.

The 12-Month Hiring Delay Roadmap

This isn't "never hire." It's "don't hire until AI has been genuinely tested and found insufficient." Here's what the year looks like:

Months 1-2: Audit and automate. Run the task audit. Identify top Category A/B tasks. Build the core automation stack (Zapier, Make, AI workflows for the highest-volume repeatable tasks). Track hours saved weekly.

Months 3-4: Tune and measure. Review output quality. Fix the workflows that underperform. Build the knowledge base that lifts AI support quality. Measure: how many hours did you recover? What tasks are still consuming time they shouldn't?

Months 5-6: Identify the gaps. After 4-6 months of AI-assisted operation, the genuine gaps become clear: not the tasks you assumed would be problems, but the ones that actually remained problems after AI was deployed. These gaps are your real hiring target.

Months 7-9: Test the gap with a project hire. Before committing to ongoing employment, hire a contractor for a specific project that addresses the genuine gap. A 60-day engagement with a skilled contractor shows you what value a human adds in this specific context, what management overhead that creates, and whether the ROI justifies ongoing cost.

Months 10-12: Decide with data. By month 10, you have at least six months of AI performance data and 60 days of human comparison data. The hiring decision stops being a feeling ("I'm overwhelmed") and becomes a comparison ("AI handles 70% of support at $20/month, and a human adds relationship context on the remaining 30% at $600/month. Is that relationship context worth $7,200/year given our current churn rate?").

That's how the hiring decision should be made. Not by feeling overwhelmed at $5K MRR, but by comparing verified AI performance against verified human value at a stage where the business can bear the cost.

The Hire Readiness Checklist

Before making any hiring decision, answer these ten questions. If you can't answer them all confidently, you're not ready to hire — you're overwhelmed, which is solvable differently.

Run a hiring readiness assessment for my business.

MY CURRENT STATE:

MRR: $[X]

Primary bottleneck: [What's consuming too much time]

AI stack currently in place: [List current tools]

Tasks I'm considering hiring for: [List]

Answer these questions:

1. Is this a capacity problem (not enough hours) or a system problem (no workflow for this task)? If system problem: What's the workflow fix?

2. Have I deployed AI for this function and measured the result? If yes: What did AI cover and what remained? If no: Why is hiring being considered before AI is tested?

3. What specific output or outcome am I hiring for? (Not "help with marketing", but a specific, measurable deliverable)

4. Can I clearly describe the role in a one-page job spec today, without figuring it out as I go? If no: The role isn't ready to hire for.

5. At current MRR, what percentage of annual revenue does this hire cost (salary + loaded costs)? If >20%: The business may not be ready for this hire.

6. What is the management overhead of this hire? Can I genuinely allocate 3-5 hrs/week to managing well?

7. Have I tested the work with a project-based contractor before committing to ongoing cost?

8. What happens if this hire doesn't work out in 90 days? Is the business financially resilient enough to absorb the mistake?

9. What specifically will this hire make possible that is not possible without them? Is that thing worth the total cost?

10. Am I hiring to solve a problem or to reduce anxiety? (Both are understandable. Only one justifies the cost.)

OUTPUT: Hire / Delay / Fix the system first, with specific reasoning for each question that didn't clearly pass.

The tenth question is the most important and the most uncomfortable. A significant proportion of solo founder hiring decisions are anxiety-relief purchases, not business investments. The feeling that having help would reduce stress is real. It's not the same as the business needing the hire.

The Right Order of Operations

Here's how this article's advice connects to the rest of the series:

First: Build the AI stack (tools and infrastructure)

Second: Design the AI team (roles and workflows)

Third: Run the systems for 3-6 months

Fourth: Audit genuine gaps: tasks AI cannot cover at your required quality level

Fifth: Test with project-based contractors for the specific gaps identified

Sixth: Make the ongoing employment decision based on verified data

Hiring before step three is a guess. Hiring after step five is a decision.

The 12-month delay isn't about avoiding people. It's about arriving at the hiring decision with enough data to make it correctly — knowing exactly what you're hiring for, what the AI will continue to cover, and what the loaded cost of the hire produces in return.

One solo founder runs an entire company using 15 AI agents with zero employees. That's one end of the spectrum. Most bootstrapped founders will eventually hire — and should, when the business demands it. The goal isn't to never hire. It's to hire later, leaner, and with much more clarity about what you're actually buying.

That clarity costs 12 months of honest AI deployment.

It's worth it.

That's it.
